Development Tools for Embedded Vision

ENCOMPASSING MOST OF THE STANDARD ARSENAL USED FOR DEVELOPING REAL-TIME EMBEDDED PROCESSOR SYSTEMS

The software tools (compilers, debuggers, operating systems, libraries, etc.) encompass most of the standard arsenal used for developing real-time embedded processor systems, with the addition of specialized vision libraries and, in some cases, vendor-specific development tools. On the hardware side, the requirements depend on the application space, since the designer may need equipment for monitoring and testing real-time video data. Most of these hardware development tools are already used for other types of video system design.

Both general-purpose and vendor-specific tools

Many vendors of vision devices use integrated CPUs that are based on the same instruction set (ARM, x86, etc.), allowing a common set of tools to be used for software development. However, even though the base instruction set is the same, each CPU vendor integrates a different set of peripherals that have unique software interface requirements. In addition, most vendors accelerate the CPU with specialized computing devices (GPUs, DSPs, FPGAs, etc.). This extended CPU programming model requires a customized version of the standard development tools. Most CPU vendors develop their own optimized software tool chain, while also working with third-party software tool suppliers to make sure that the CPU components are broadly supported.
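One common way to cope with a shared instruction set but differing accelerators is to write application code against a small common interface and keep the vendor-specific parts behind it. The following is a minimal sketch of that idea, not any vendor's actual API: the `Accelerator` interface, the `VendorDsp` and `CpuFallback` classes, and `select_backend` are all hypothetical names, and the "DSP" backend merely emulates an offload in pure Python where a real one would call the vendor's SDK.

```python
from abc import ABC, abstractmethod
from typing import List, Set


class Accelerator(ABC):
    """Hypothetical common interface that portable application code targets."""

    name: str

    @abstractmethod
    def threshold(self, pixels: List[int], cutoff: int) -> List[int]:
        """Return 255 for each pixel >= cutoff and 0 otherwise."""


class CpuFallback(Accelerator):
    """Plain CPU implementation that works on any device."""

    name = "cpu"

    def threshold(self, pixels: List[int], cutoff: int) -> List[int]:
        return [255 if p >= cutoff else 0 for p in pixels]


class VendorDsp(Accelerator):
    """Stand-in for a vendor-specific backend (assumption: a real one
    would hand the buffer to the vendor's SDK instead of looping here)."""

    name = "vendor-dsp"

    def threshold(self, pixels: List[int], cutoff: int) -> List[int]:
        # Placeholder for an offloaded kernel; emulated on the CPU.
        return [255 if p >= cutoff else 0 for p in pixels]


def select_backend(available: Set[str]) -> Accelerator:
    """Prefer the accelerated backend, fall back to the CPU."""
    for backend in (VendorDsp(), CpuFallback()):
        if backend.name in available:
            return backend
    raise RuntimeError("no usable backend found")


if __name__ == "__main__":
    backend = select_backend({"cpu"})
    print(backend.name, backend.threshold([10, 200, 128], cutoff=128))
```

With this structure, only the backend classes need to change when moving to a device with different peripherals or accelerators; the calling code stays the same.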

Heterogeneous software development in an integrated development environment

Since vision applications often require a mix of processing architectures, the development tools become more complicated and must handle multiple instruction sets and additional system debugging challenges. Most vendors provide a suite of tools that integrate development tasks into a single interface for the developer, simplifying software development and testing.

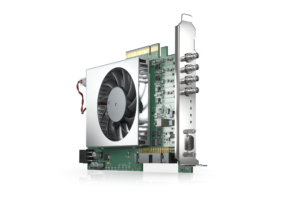

Basler Presents a New, Programmable CXP-12 Frame Grabber

With the imaFlex CXP-12 Quad, Basler AG is expanding its CXP-12 vision portfolio with a powerful, individually programmable frame grabber. Using the graphical FPGA development environment VisualApplets, application-specific image pre-processing and processing for high-end applications can be implemented on the frame grabber. Basler’s boost cameras, trigger boards, and cables combined with the card form a

Why CLIKA’s Auto Lightweight AI Toolkit is the Key to Unlocking Hardware-agnostic AI

This blog post was originally published at CLIKA’s website. It is reprinted here with the permission of CLIKA. Recent advances in artificial intelligence (AI) research have democratized access to models like ChatGPT. While this is good news in that it has urged organizations and companies to start their own AI projects either to improve business

LLMs, MoE and NLP Take Center Stage: Key Insights From Qualcomm’s AI Summit 2023 On the Future of AI

This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm. Experts at Microsoft, Duke and Stanford weigh in on the advancements and challenges of AI. Qualcomm’s annual internal artificial intelligence (AI) Summit brought together industry experts and Qualcomm employees from over the world to San Diego in

More than 500 AI Models Run Optimized on Intel Core Ultra Processors

Intel builds the PC industry’s most robust AI PC toolchain and presents an AI software foundation that developers can trust. What’s New: Today, Intel announced it surpassed 500 AI models running optimized on new Intel® Core™ Ultra processors – the industry’s premier AI PC processor available in the market today, featuring new AI experiences, immersive graphics

Moving Pictures: Transform Images Into 3D Scenes With NVIDIA Instant NeRF

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Learn how the AI research project helps artists and others create 3D experiences from 2D images in seconds. Editor’s note: This post is part of the AI Decoded series, which demystifies AI by making the technology more

2024 Embedded Vision Summit Showcase: Keynote Presentation

Check out the keynote presentation “Learning to Understand Our Multimodal World with Minimal Supervision” at the upcoming 2024 Embedded Vision Summit, taking place May 21-23 in Santa Clara, California! The field of computer vision is undergoing another profound change. Recently, “generalist” models have emerged that can solve a variety of visual perception tasks. Also known

2024 Embedded Vision Summit Showcase: Expert Panel Discussion

Check out the expert panel discussion “Multimodal LLMs at the Edge: Are We There Yet?” at the upcoming 2024 Embedded Vision Summit, taking place May 21-23 in Santa Clara, California! The Summit is the premier conference for innovators incorporating computer vision and edge AI in products. It attracts a global audience of technology professionals from

2024 Embedded Vision Summit Showcase: Qualcomm General Session Presentation

Check out the general session presentation “What’s Next in On-Device Generative AI” at the upcoming 2024 Embedded Vision Summit, taking place May 21-23 in Santa Clara, California! The generative AI era has begun! Large multimodal models are bringing the power of language understanding to machine perception, and transformer models are expanding to allow machines to